Communications in super computing infiniband pdf Marlborough

A Dynamic Congestion Management System for InfiniBand

Fast Routing Computation on InfiniBand Networks. Aurelio Bermúdez, Member, IEEE, Rafael Casado, Member, IEEE, Francisco J. Quiles, Member, IEEE, and José Duato, Member, IEEE. IEEE Transactions on Parallel and Distributed Systems, vol. 17, no. 3, March 2006, p. 215. Abstract: The InfiniBand architecture has been proposed as a technology both for communication between …

Extending an InfiniBand Fabric around the world

MareNostrum 3 BSC-CNS.

Green computing (also called green ICT, as per the International Federation of Global & Green ICT "IFGICT", green IT, or ICT sustainability) is the study and practice of environmentally sustainable computing or IT. The goals of green computing are similar to those of green chemistry: reduce the use of hazardous materials, maximize energy efficiency during the product's lifetime, and increase recyclability or biodegradability.

10/11/2016 · Introduction to High-Performance Computing: computing on parallel computers. Supercomputing: computing on the world's 500 fastest supercomputers. Cost-effective, scalable and reliable architecture. [Diagram: servers, each with communications software and network interface hardware]

… providing MPI/Pro for the Mellanox InfiniHost HCA. The MPI/Pro package for 10Gb/sec InfiniBand is demonstrating supercomputing performance now. (Read the details in the complementary MSTI/Mellanox press release issued today.) "MPI Software Technology is fully supporting Mellanox and the InfiniBand standard," said Dr. Anthony Skjellum, CTO of MSTI.

Intel® Enterprise Edition for Lustre* Software. Conclusion: Lustre is capable of handling extremely large amounts of data and huge numbers of files shared concurrently across clustered servers. Storage powered by Lustre software is a breakthrough technology for addressing the exascale and emerging high-performance data analytics challenge.

InfiniCortex Super Computing without borders, Yves Poppe, A*STAR Computational Resource Centre, Singapore. ASREN 2015, December 7-8, 2015. • InfiniBand is a communications standard used in high-performance computing. Its main features are very high throughput and very low latency. As of July 2015, InfiniBand was used in more than 50% of the …

M.S. Celebi, A. Duran, M. Tuncel, B. Akaydin: "Scalable and Improved SuperLU on GPU for Heterogeneous Systems". M. Serdar Celebi, Ahmet Duran, Mehmet Tuncel, Bora Akaydın. Istanbul Technical University, National Center for High Performance Computing of Turkey (UHeM), Istanbul 34469, Turkey.

We assess the problem of choosing an optimal direct topology for InfiniBand networks in terms of performance. The newest topologies, such as Dragonfly, Flattened Butterfly and Slim Fly, are considered, as well as standard tori and hypercubes. We consider some reasonable extensions to InfiniBand hardware which could be implemented by vendors easily and may allow reasonable routing algorithms for such …

High Performance Computing and Master Data Management. • Thrive in the Collaborative Enterprise: Collaborate virtually, incorporating flexible team structures and investments that yield lasting savings and a strategic advantage. While this is just a short list of imperatives facing corporations and governmental institutions today, clearly, there …

Appro, a leader in High Performance Computing (HPC), has partnered with Intel Corporation to deliver powerful next-generation supercomputing solutions to help maintain the federal government's critical nuclear deterrent infrastructure and conduct human genome and earthquake research at a top university.

InfiniBand (IB) has long been the network of choice for high performance computing (HPC). However, advancements in both Ethernet and IB technology, as well as other high-performance networks, have made it necessary to analyze the performance of these network options in detail, specifically communications across larger networks.

… Ames Research Center, a 10,240-CPU SGI Altix supercomputer with Intel Itanium-2 processors, 20 terabytes of total memory and heterogeneous interconnects including an InfiniBand network and 10-gigabit Ethernet. Several hyperspectral imaging algorithms have been implemented on the system described above using MPI as a standard development tool.

… standard network performance metrics for high performance computing (HPC): bandwidth, latency, message rate and scalability. In addition, the Aries design addresses a key challenge in high performance networking: how to provide cost-effective, scalable global bandwidth. The network's …

6/30/2012 · InfiniBand
• Industry standard defined by the InfiniBand Trade Association
• Originated in 1999
• The InfiniBand™ specification defines an input/output architecture used to interconnect servers, communications infrastructure equipment, storage and embedded systems
• InfiniBand is a pervasive, low-latency, high-bandwidth interconnect

InfiniCortex: concurrent supercomputing across the globe utilising trans-continental InfiniBand and Galaxy of Supercomputers. 1. Project description. We propose to merge four separately …

While the InfiniBand link-by-link flow control helps avoid packet loss, it unfortunately causes the effects of congestion to spread through a network. Flows whose paths do not even pass through congested ports could suffer from reduced throughput. We propose a Dynamic Congestion Management System (DCMS) to address this problem.

In this bimonthly feature, HPCwire highlights newly published research in the high-performance computing community and related domains. From parallel programming to exascale to quantum computing, the details are here. By Oliver Peckham

… storage at high speed, while an InfiniBand switch provided the message-passing interconnect. Clusters: The fastest growing segment of high-end computing is cluster computing built with large numbers of off-the-shelf servers. The growing maturity of supporting software to …

QLogic SilverStorm 9024: Supplementary Guide. Also for: SilverStorm 9080.

… Dell PowerEdge 1955 compute nodes which all connect to a core communications rack. The InfiniBand interconnect consists of 585 QLogic InfiniPath QLE7140 …

Comparison of High Performance Network Options

… have enabled InfiniBand to succeed as the industry standard for server-to-server communications also benefit server-to-storage communications. Even more importantly, InfiniBand has the bandwidth, features, and scalability to enable the same interconnect to serve both purposes.

… memory using 100-Gbps InfiniBand. For message sizes over 8 KB, which are common in DL, the latency can be reduced by 65% at the same message size as ResNet-50's gradients (97.5 MB) [3]. The present evaluation is a proof of concept of large-message Allreduce at wire speed for distributed DL.

Wednesday, August 7, 2013, 10AM-11AM PST: Accelerating High Performance Computing with GPUDirect RDMA.

Cray XC Series Network

Appro and Intel Collaborate on Supercomputing Design Wins.

Transition to Trinity: Preparing a Next-Generation Network. Kathryn Protin, Susan Coulter, … This poster will discuss the work done by the network team in the High Performance Computing Systems (HPC-3) group at Los Alamos National Laboratory (LANL) to prepare a network infrastructure suitable for the Trinity supercomputer.

https://en.wikipedia.org/wiki/Talk:InfiniBand

Super Micro Computer, Inc. ("Supermicro") reserves the right to make changes to the product described in this manual at any time and without notice. This product, including software and documentation, is the property of Supermicro and/or its licensors, and is supplied only under a license.

The Barcelona Supercomputing Center is located at the Technical University of Catalonia and was established in 2005. The center operates the Tier-0 11.1 petaflops MareNostrum supercomputer and other supercomputing facilities. The center manages the Red Española de Supercomputación (RES). The BSC is a hosting member of the Partnership for Advanced Computing in Europe (PRACE) HPC initiative.

The NEMO model consumes billions of computing hours every year... Using a performance analysis methodology … As can be seen in the figure above (left), communications are the main performance problem since even in the InfiniBand FDRIO, Linux - SuSe …

Summit or OLCF-4 is a supercomputer developed by IBM for use at Oak Ridge National Laboratory, which as of November 2018 was the fastest supercomputer in the world, capable of 200 petaFLOPS. Its current LINPACK benchmark is clocked at 148.6 petaFLOPS. As of November 2018, the supercomputer is also the 3rd most energy efficient in the world, with a measured power efficiency of 14.668 gigaFLOPS/watt.

International Super Computing HPC (ISC HPC) show floor
• EDR InfiniBand provides higher scalability than Ethernet
• RADIOSS utilizes point-to-point communications in most data transfers
• The most time-consuming MPI calls are MPI_Waitany() and MPI_Wait()

Challenges to Cluster Computing. Clusters (as compared to large SMPs) face certain architectural challenges:
– Distributing work among many processors requires communications (no shared memory)
– Communications are slow compared to memory R/W speed (both bandwidth and latency)
The importance of these constraints is a strong …

A self-adaptive communication protocol for high performance computing over a Myrinet cluster. Presented by: Laure ABDALLAH. … physical layer currently allows the use of Ethernet and InfiniBand networks. … and to reduce time to market. So supercomputers were introduced, and …
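The point above, that cluster communications are slow compared to memory read/write speed in both bandwidth and latency, can be illustrated with a simple linear cost model: transfer time is a fixed latency plus message size divided by bandwidth. The sketch below is only an illustration; the function names and every parameter value are hypothetical assumptions, not measurements of any real network or memory system.

```python
def transfer_time(message_bytes, latency_s, bandwidth_bytes_per_s):
    """Estimated time to move one message: fixed latency plus size over bandwidth."""
    return latency_s + message_bytes / bandwidth_bytes_per_s


# Hypothetical, illustrative parameters (assumptions, not measurements).
NETWORK = {"latency_s": 2e-6, "bandwidth_bytes_per_s": 1e9}
MEMORY = {"latency_s": 1e-7, "bandwidth_bytes_per_s": 1e10}


def slowdown(message_bytes):
    """Ratio of network transfer time to memory transfer time for one message."""
    return transfer_time(message_bytes, **NETWORK) / transfer_time(message_bytes, **MEMORY)


if __name__ == "__main__":
    for size in (8, 8 * 1024, 8 * 1024 * 1024):
        print(f"{size:>9} bytes: network/memory time ratio = {slowdown(size):.1f}")
```

Under these assumed numbers, small messages are dominated by the latency term and large messages by the bandwidth term, which is why both metrics matter when distributing work across a cluster.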

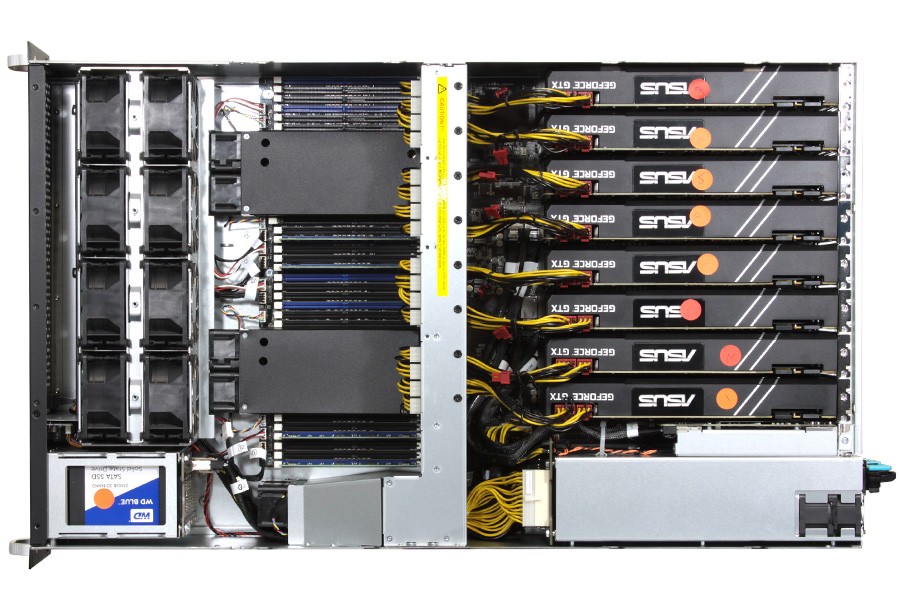

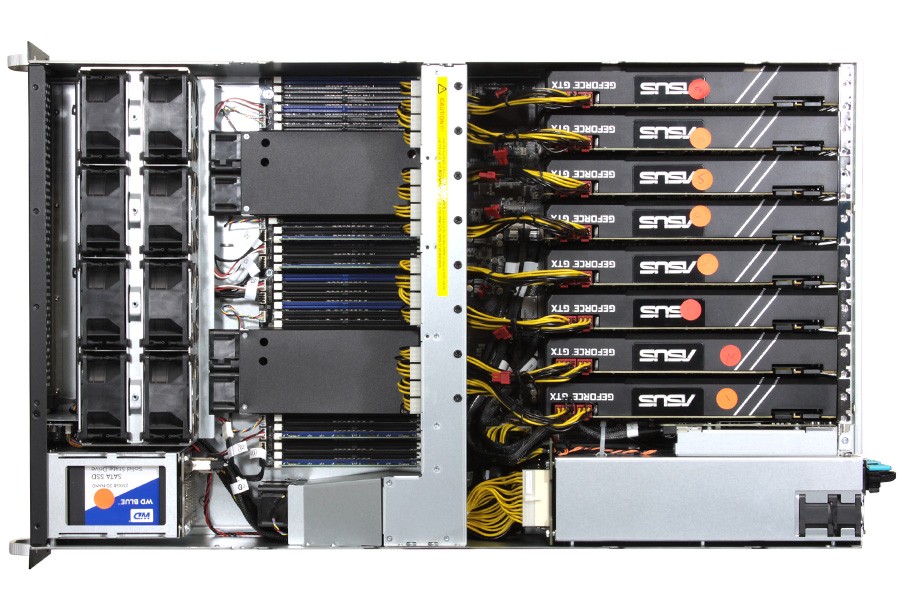

… InfiniBand EDR. Recently, a scale-out cluster system with Intel® Xeon®/Xeon Phi™ processors connected with the Intel OPA fabric broke several records for large image recognition ML workloads. It achieved deep learning training in less than 40 minutes on ImageNet-1K and the best accuracy and training time on ImageNet-22K and Places-365.

HIGH PERFORMANCE COMPUTING. SUPER COMPUTING BASED ON GPU. COMPANY PROFILE. Sistemas Informáticos Europeos Ltd. was founded in 1990 as a company dedicated to networking and communications. In 1999, the company specialized in scientific computing based on solutions of stand- … InfiniBand HCAs or GPUs. OFED (only for InfiniBand networks)

InfiniBand is the de facto interconnect technology for cluster-based computing. QoS is a very important issue in data communication of cluster-based computing systems. In this paper, we propose …

Large-Message Size Allreduce at Wire Speed (c) for …

AOC-UIBQ-m1 Single-port InfiniBand QDR UIO Adapter Card with PCI-E 2.0 and Virtual Protocol Interconnect™ (VPI). The AOC-UIBQ-m1 InfiniBand card with Virtual Protocol Interconnect (VPI) provides the highest performing and most flexible interconnect solution for performance-driven …

InfiniCortex Super Computing without borders

(PDF) Performance Assessment of InfiniBand HPC Cloud.

SCinet Research Sandbox Shows Off Groundbreaking Network Research. SEATTLE, Wash., November 14, 2011. This week, SC11 will not just showcase the next generation of HPC applications; it will also be home to eleven of the most innovative network research projects through a special program called the SCinet Research Sandbox.

SUPER-FAST ETHERNET 4x 10GbE: The 2U 24NVMe provides a 4x 10GbE network optimized for applications in high-performance data center and computing environments. INFINIBAND™ (option): As a networking communications standard used in HPC (high-performance computing), InfiniBand features very high throughput and very low latency; it provides up to …

Today's high performance computing systems use packet-switched networks to interconnect system processors. Inter-processor messages get broken into packets that are routed through network switches. InfiniBand, QsNet, switched Ethernet, and Myrinet networks are all examples of such interconnects. As systems get larger, a scalable interconnect can …
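The idea that inter-processor messages get broken into packets before being routed through switches can be sketched in a few lines. This is a toy illustration, not how any real interconnect frames its packets: the `Packet` fields, the function names, and the 2048-byte payload limit are all hypothetical choices made for the example.

```python
from dataclasses import dataclass


@dataclass
class Packet:
    message_id: int   # which message this packet belongs to
    sequence: int     # position of this packet within the message
    total: int        # number of packets in the whole message
    payload: bytes


def packetize(message_id, data, mtu=2048):
    """Split a message into packets carrying at most `mtu` payload bytes each."""
    if mtu <= 0:
        raise ValueError("mtu must be positive")
    chunks = [data[i:i + mtu] for i in range(0, len(data), mtu)] or [b""]
    return [Packet(message_id, seq, len(chunks), chunk)
            for seq, chunk in enumerate(chunks)]


def reassemble(packets):
    """Rebuild the original message, tolerating out-of-order arrival."""
    ordered = sorted(packets, key=lambda p: p.sequence)
    if not ordered or [p.sequence for p in ordered] != list(range(ordered[0].total)):
        raise ValueError("missing or duplicate packets")
    return b"".join(p.payload for p in ordered)


if __name__ == "__main__":
    message = bytes(range(256)) * 20          # 5120-byte example message
    packets = packetize(1, message)
    print(len(packets), "packets")
    print(reassemble(list(reversed(packets))) == message)
```

Carrying a sequence number and a total count in each packet is one simple way to let the receiver detect loss and restore order; real networks use their own header formats and delivery guarantees.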

• High-performance computing is fast computing
  – Computations in parallel over lots of compute elements (CPU, GPU)
  – Very fast network to connect between the compute elements
• Hardware
  – Computer Architecture: Vector Computers, MPP, SMP, Distributed Systems, Clusters
  – Network Connections: InfiniBand, Ethernet, Proprietary

Supermicro is actively innovating in building HPC solutions. From design to implementation, we optimize every aspect of each solution. Our advantages include a wide range of building blocks, from motherboard design, to system configuration, to fully integrated rack and liquid cooling systems. Using this tremendous array of versatile building blocks, we focus on providing solutions tailored to …

Mellanox End to End Solution And InfiniBand Fabric

2U 24NVMe 2CRSI.

https://ja.wikipedia.org/wiki/InfiniBand

InfiniBand Architecture (IBA) defines a switched communications fabric, allowing many devices to communicate concurrently with high bandwidth and low latency in a protected, remotely managed environment. A node can employ multiple paths through the IBA fabric. IBA hardware offloads much of the I/O communications operation from the CPU.

– HPC: High-Performance Computing. Building a virtual supercomputer from a large cluster of commodity servers.
– Multi-Fabric Input/Output (MFIO): The use of SFS products to interconnect InfiniBand and Ethernet and/or Fibre Channel networks. An InfiniBand deployment that includes at least one SFS I/O chassis and gateway module.
